Most companies have a quiet graveyard of AI projects.

In some forgotten folder, the decks for a “smart customer assistant,” an “intelligent contract analyzer,” or an “autonomous support agent” are still sitting there: once promising, now abandoned after the pilot phase.

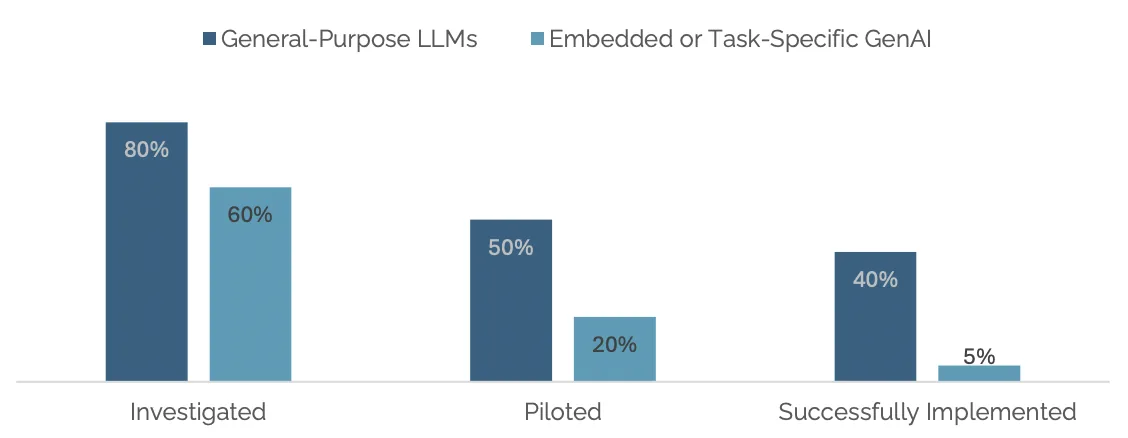

This is not anecdotal. MIT’s Project NANDA found that despite tens of billions of dollars invested in generative AI, 95% of organizations see no measurable return from their projects, with only 5% of integrated pilots generating real P&L impact.

Source: MIT NANDA

RAND Corporation research confirms that AI projects fail at twice the rate of traditional IT projects, with over 80% never reaching meaningful production use.

The numbers get sharper when you zoom in on agentic AI specifically.

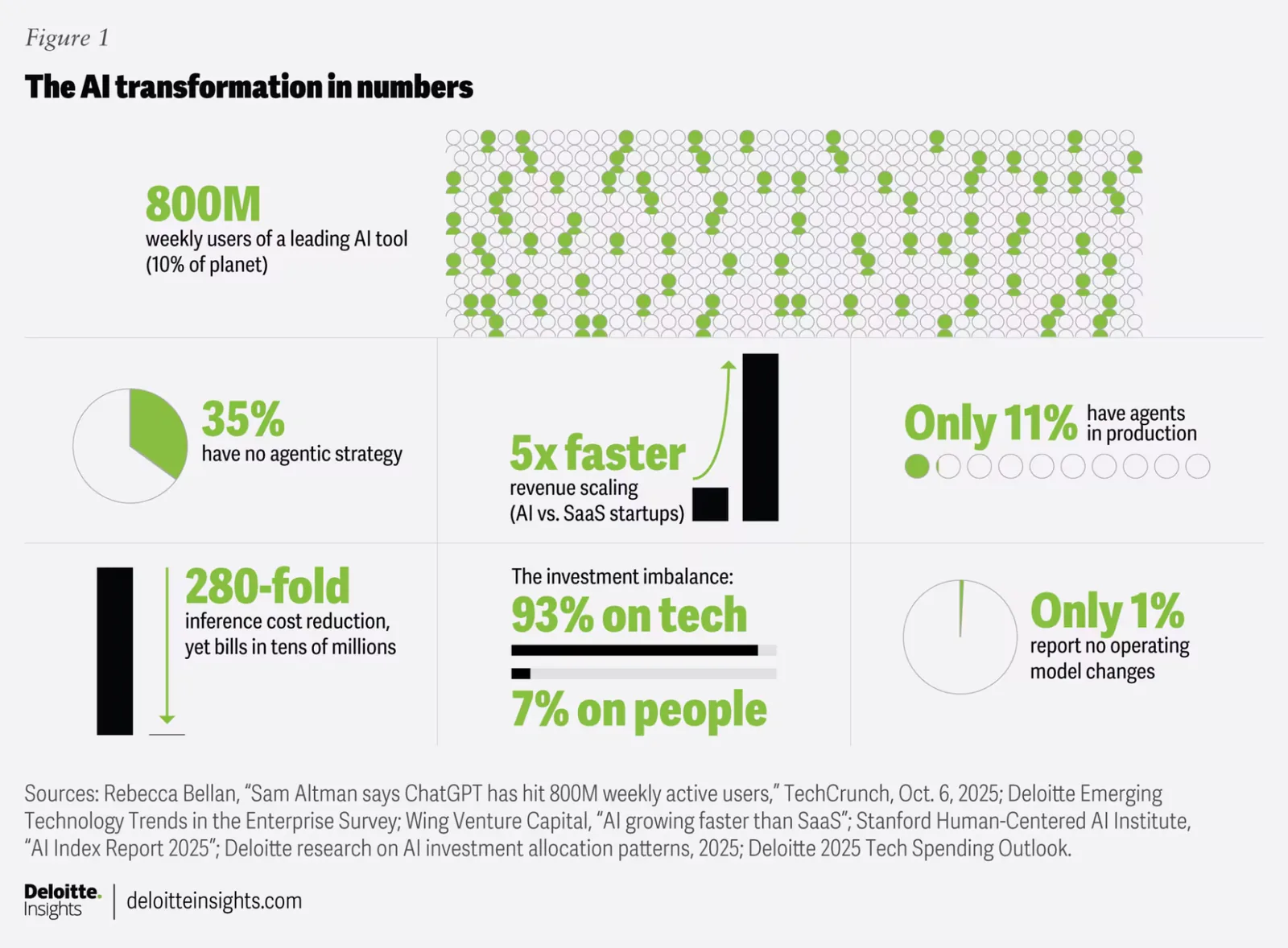

Gartner predicts that over 40% of agentic AI projects will be cancelled by the end of 2027, citing escalating costs, unclear business value, and inadequate risk controls. Deloitte’s research puts the share of organizations with production-ready agentic implementations at just 14%, with only 11% actively using them today.

Source: Deloitte

And McKinsey’s late-2025 survey found that while 88% of enterprises are experimenting with AI, just 6% qualify as “high performers” capturing significant business value.

The gap between those experimenting and those succeeding is not a rounding error. It represents billions of dollars in engineering time, vendor contracts, and leadership attention spent on pilots, demos, and proofs of concept that never crossed into real use.

So the question worth asking is not whether agentic AI works. It does, under the right conditions. The question is why so many organizations fail to build those conditions, and what the ones getting it right are doing differently.

That’s what this piece explores. Keep reading.

The Gap Between Pilot and Production Is Not a Technology Problem

Most organizations experiencing agentic AI failures are not failing because they chose the wrong model or the wrong vendor. They are failing because they treat the technology as the solution rather than as a tool, and skip the organizational and infrastructure work that makes agents reliable in production.

The same Deloitte study found that 42% of organizations are still developing their agentic AI strategy roadmap, and 35% have no formal strategy at all.

That means most enterprises currently investing in agentic AI do not yet have a clear picture of where it should go, what success looks like, or what governance it requires.

Put another way: they are building the plane while flying it, and a significant portion are finding out too late that they have run out of runway.

The differentiator between a proof-of-concept that impresses in a demo and a production-grade agent that reliably handles real enterprise work is not the sophistication of the underlying model. It’s whether the infrastructure, data, and governance surrounding the agent were built to support autonomous decision-making at scale.

There is also a compounding mathematical problem with agentic systems that most organizations underestimate. If an AI agent achieves 80% accuracy per action, and each action succeeds or fails independently, a 10-step workflow succeeds only about 11% of the time (0.8^10 ≈ 0.107).

In other words, errors compound across steps rather than averaging out. What looks acceptable in a single-step task becomes a reliability crisis in any workflow requiring real autonomy.
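The arithmetic is easy to check. The sketch below is a back-of-the-envelope model, not a measurement of any real agent: it assumes every step succeeds independently with the same probability, which real workflows rarely satisfy exactly (retries and human checkpoints can improve the odds; correlated failures can worsen them). The function names are illustrative.

```python
def workflow_success_rate(step_accuracy: float, steps: int) -> float:
    """Probability that every step succeeds, assuming independent, identical steps."""
    return step_accuracy ** steps


def required_step_accuracy(target_rate: float, steps: int) -> float:
    """Per-step accuracy needed for the whole workflow to reach target_rate."""
    return target_rate ** (1 / steps)


# The figures from the text: 80% per step over 10 steps.
print(f"{workflow_success_rate(0.80, 10):.1%}")  # 10.7%

# Per-step accuracy needed for a 10-step workflow to succeed 90% of the time.
print(f"{required_step_accuracy(0.90, 10):.2%}")  # 98.95%
```

Under the same assumption, even 95% per-step accuracy gives a 10-step workflow only about a 60% chance of finishing cleanly (0.95^10 ≈ 0.599).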

5 Reasons Projects Stall Before They Ship

When you look across the deployment data and the industry analysis, you’ll find that most failures cluster around these five recognizable patterns:

- The use case problem

- The legacy system problem

- The culture problem

- The data problem

- The governance problem

Let’s have a look at each.

The Use Case Problem

One of the most consistent findings across failed deployments is that organizations selected use cases based on what was technically interesting rather than what would deliver measurable business value.

“Many use cases positioned as agentic today don’t require agentic implementations. Most agentic AI projects right now are early stage experiments or proof of concepts that are mostly driven by hype and are often misapplied.”

— Anushree Verma, Senior Director Analyst at Gartner

Building an autonomous agent to handle a workflow that a simpler automation tool could manage just as well is not progress. It’s simply expensive complexity in search of a justification.

The fix is deceptively simple but rarely done rigorously: define the expected outcome in measurable terms before a single line of code is written. Not “improve customer service” but “reduce average resolution time from X hours to Y hours while maintaining Z% accuracy.”

That precision is what separates a project that can be evaluated honestly from one that gets quietly shut down because nobody agreed on what success looked like.
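One way to hold a pilot to that standard is to write the targets down as data before any agent is built, then check measured results against them. This is a minimal sketch; `SuccessCriteria`, `PilotResult`, `meets_criteria`, and the example numbers are hypothetical stand-ins for whatever X, Y, and Z a team actually agrees on.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuccessCriteria:
    """Targets agreed before development starts (hypothetical fields)."""

    baseline_resolution_hours: float  # X: today's average resolution time
    target_resolution_hours: float    # Y: the time the agent must reach
    min_accuracy: float               # Z: accuracy floor, as a fraction (0 to 1)

    def __post_init__(self) -> None:
        # Reject targets that would not count as an improvement.
        if self.target_resolution_hours >= self.baseline_resolution_hours:
            raise ValueError("target must be faster than the baseline")
        if not 0.0 <= self.min_accuracy <= 1.0:
            raise ValueError("min_accuracy must be between 0 and 1")


@dataclass(frozen=True)
class PilotResult:
    """Metrics measured during the pilot (hypothetical fields)."""

    avg_resolution_hours: float
    accuracy: float


def meets_criteria(criteria: SuccessCriteria, result: PilotResult) -> bool:
    """Pass only if the time target is met without dropping below the accuracy floor."""
    return (
        result.avg_resolution_hours <= criteria.target_resolution_hours
        and result.accuracy >= criteria.min_accuracy
    )


# Illustrative numbers only: 24h today, 8h target, 95% accuracy floor.
criteria = SuccessCriteria(24.0, 8.0, 0.95)
print(meets_criteria(criteria, PilotResult(6.5, 0.97)))  # True
print(meets_criteria(criteria, PilotResult(6.5, 0.90)))  # False
```

Checking both conditions together matters: a pilot that gets faster by getting less accurate should not count as a success.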

The Legacy System Problem

Most enterprise systems were designed for humans to use, not for autonomous agents to operate within.

Those systems rely on human judgment to interpret ambiguous outputs, present information in formats built for reading and contextual understanding, and handle exceptions by escalating decisions to someone who can step in and make the call.

So when an autonomous agent encounters a system built for human interpretation, it either breaks, makes a bad decision with confidence, or gets stuck and requires intervention, which defeats the purpose of deploying it in the first place.

Integrating agents into legacy environments is also rarely straightforward. It typically introduces technical complexity, interrupts established workflows, and requires expensive changes that organizations didn’t anticipate.

The Culture Problem

Organizations focus heavily on the technology and the infrastructure and underinvest in the human side of adoption.

That’s a problem, because culture is the real force multiplier. Even the most advanced tools fall short if teams don’t trust them or understand how to use them effectively.

When employees aren’t involved in shaping agent-driven workflows, that trust never forms, and any unexpected output quickly becomes a reason to work around the system instead of relying on it.

Organizations that succeed with agentic AI take a different approach. They involve end users from the outset, design agents as collaborators rather than replacements, and build transparency into how the system works so people can clearly see what the agent is doing and why.

When that’s missing, the outcome isn’t just lower efficiency; it’s a system that people actively resist using.

The Data Problem

An agent is only as reliable as the information it can access and reason from. Gartner predicts that through 2026, organizations will abandon 60% of AI projects that are unsupported by AI-ready data.

The issue is not that organizations lack data; they have enormous amounts of it. Rather, it’s that the data is siloed, inconsistent, and structured for reporting rather than for continuous autonomous decision-making.

In the Deloitte survey, nearly half of organizations cited data searchability and data reusability as challenges to their AI automation strategy.

The implication is clear: investing in a sophisticated agent before building a data foundation capable of supporting it is an expensive way to learn that the foundation was not there.

The Governance Problem

At some companies, most generative AI spending goes to demonstrations and experimentation, while governance, audit, and oversight infrastructure is treated as a post-deployment problem. For agentic AI specifically, that sequencing is a serious risk.

When a large language model hallucinates inside a chatbot, the result is a wrong answer. When it hallucinates inside an autonomous agent, the result is an action taken on false information, possibly without leaving a trace of how the decision was made.

In financial services or healthcare, that can lead to serious compliance and liability problems.

Governance for agentic AI (defining what the agent can do, what it must escalate, what gets logged, and what gets audited) needs to come first, not after deployment.
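As a rough illustration of what “governance first” can look like in code, the sketch below puts a policy check in front of every action an agent attempts: actions outside an allowlist are denied, high-value actions are escalated to a human, and every decision is written to an audit log. `AgentPolicy`, `authorize`, the action names, and the 500.0 threshold are all hypothetical; a production system would persist the log in tamper-evident storage and tie into existing access controls rather than keep it in memory.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    ESCALATE = "escalate"
    DENY = "deny"


@dataclass
class AgentPolicy:
    """Hypothetical guardrail checked before an agent executes any action."""

    allowed_actions: frozenset[str]  # what the agent can do
    escalation_threshold: float      # amounts at or above this need a human
    audit_log: list[dict] = field(default_factory=list)  # what gets logged

    def authorize(self, action: str, amount: float, rationale: str) -> Decision:
        # Deny by default: anything not explicitly allowed is blocked.
        if action not in self.allowed_actions:
            decision = Decision.DENY
        # High-impact actions are routed to a person instead of executed.
        elif amount >= self.escalation_threshold:
            decision = Decision.ESCALATE
        else:
            decision = Decision.ALLOW
        # Every decision is recorded with the agent's stated rationale,
        # so it can be audited later.
        self.audit_log.append(
            {
                "timestamp": datetime.now(timezone.utc).isoformat(),
                "action": action,
                "amount": amount,
                "rationale": rationale,
                "decision": decision.value,
            }
        )
        return decision


policy = AgentPolicy(
    allowed_actions=frozenset({"issue_refund", "update_address"}),
    escalation_threshold=500.0,
)
print(policy.authorize("issue_refund", 120.0, "duplicate charge"))   # Decision.ALLOW
print(policy.authorize("issue_refund", 2000.0, "customer dispute"))  # Decision.ESCALATE
print(policy.authorize("close_account", 0.0, "customer request"))    # Decision.DENY
```

Denying by default is the conservative choice here; the escalation path is what keeps the agent useful without handing it open-ended authority.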

The Agent Washing Problem

There is another layer to all of this that makes the decision harder for organizations trying to do it right. A significant portion of what is currently marketed as agentic AI is not actually agentic AI.

Gartner estimates that only around 130 of the thousands of vendors currently marketing agentic AI products offer genuine capabilities. The rest are engaging in what the industry has started calling agent washing: rebranding existing chatbots, robotic process automation tools, and basic automation as agentic AI without adding the planning, reasoning, and autonomous execution capabilities that actually define the category.

For enterprise teams, this creates a real problem. You cannot govern something that isn’t clearly defined.

When vendors label any AI-looking system as “agentic,” it blurs the lines between what systems can actually do and what they claim to do. That makes it harder to compare tools, set expectations with leadership, and put the right controls in place.

Organizations that invested in agent-washed products and then saw their projects fail were never running genuine agentic AI to begin with. They were running over-marketed automation and expecting autonomous results.

The first step to building the right foundation is making sure that what you are building on is actually what it claims to be.

What the Organizations Actually Succeeding Are Doing Differently

The pattern across successful deployments is consistent, and it is less about the technology than about the sequencing.

Here are some quick pointers:

- They start with a specific, bounded workflow that has clear success criteria and a meaningful business outcome attached to it. That precision is what makes it possible to know whether an agent would actually work in production.

- They treat data infrastructure as a prerequisite rather than an integration task to handle after deployment, building it in as a first-class architectural component from the start.

- They build governance, audit trails, escalation paths, and access controls into the system from day one rather than retrofitting them after an incident. Building that layer after the agent is already deployed is significantly harder and more expensive than building it first.

- They involve end users early in the design process rather than presenting a finished system and expecting adoption. This ensures the system reflects real workflows and reduces resistance when it is rolled out.

- They start smaller than they think they need to, prove value in a limited scope, and expand from a foundation that actually works. Projects that try to automate entire complex workflows from day one typically fail due to too many variables and potential failure points.

Ultimately, think of agentic AI as bringing on a new employee, not installing software.

That means planning for training, iteration, and ongoing improvement, setting clear metrics before anything is built, and designing for failure from the start.

These habits are not complicated, but they do require a level of discipline that most teams still haven’t built into how they adopt AI.

How GAP Helps Organizations Build the Foundation First

The difference between failed and successful agentic AI projects comes down to timing and readiness. The pattern is consistent: organizations that rush into implementation struggle, while those that take the time to build the foundation first see real results.

GAP’s Validate:AI is built specifically for this challenge. Rather than asking organizations to commit to full implementation before they have validated what actually works in their environment, it provides a structured path from proof of concept to production, with each stage grounded in measurable business outcomes and designed to surface infrastructure, data, and governance gaps before they become expensive production failures.

GAP’s AI Acceleration Workshops help engineering and leadership teams align on where agentic AI genuinely applies, what the right scope looks like, and what organizational foundations need to be in place before agents are deployed.

For teams that need deeper engineering capacity to build those foundations, GAP brings expertise in data engineering and MLOps practices that most internal teams cannot sustain alone while also shipping products.

The goal is not to slow down agentic AI adoption. It’s to make sure the 40% cancellation rate is not something your organization contributes to.

Talk to a GAP expert about where to start.