On February 3, 2025, Andrej Karpathy, co-founder of OpenAI and former Director of AI at Tesla, posted on X about a new way of building software he had been experimenting with.

Source: X

He described a workflow where you “fully give in to the vibes, embrace exponentials, and forget that the code even exists.” In other words, you prompt, accept, and run. If something breaks, you paste the error back in and let the model fix it, without needing to read the code.

He termed this Vibe Coding.

By the end of 2025, Collins Dictionary had named “vibe coding” its word of the year.

Source: Collins

The term had moved from a throwaway tweet to something with its own Wikipedia entry, its own tooling ecosystem, and its own cultural moment.

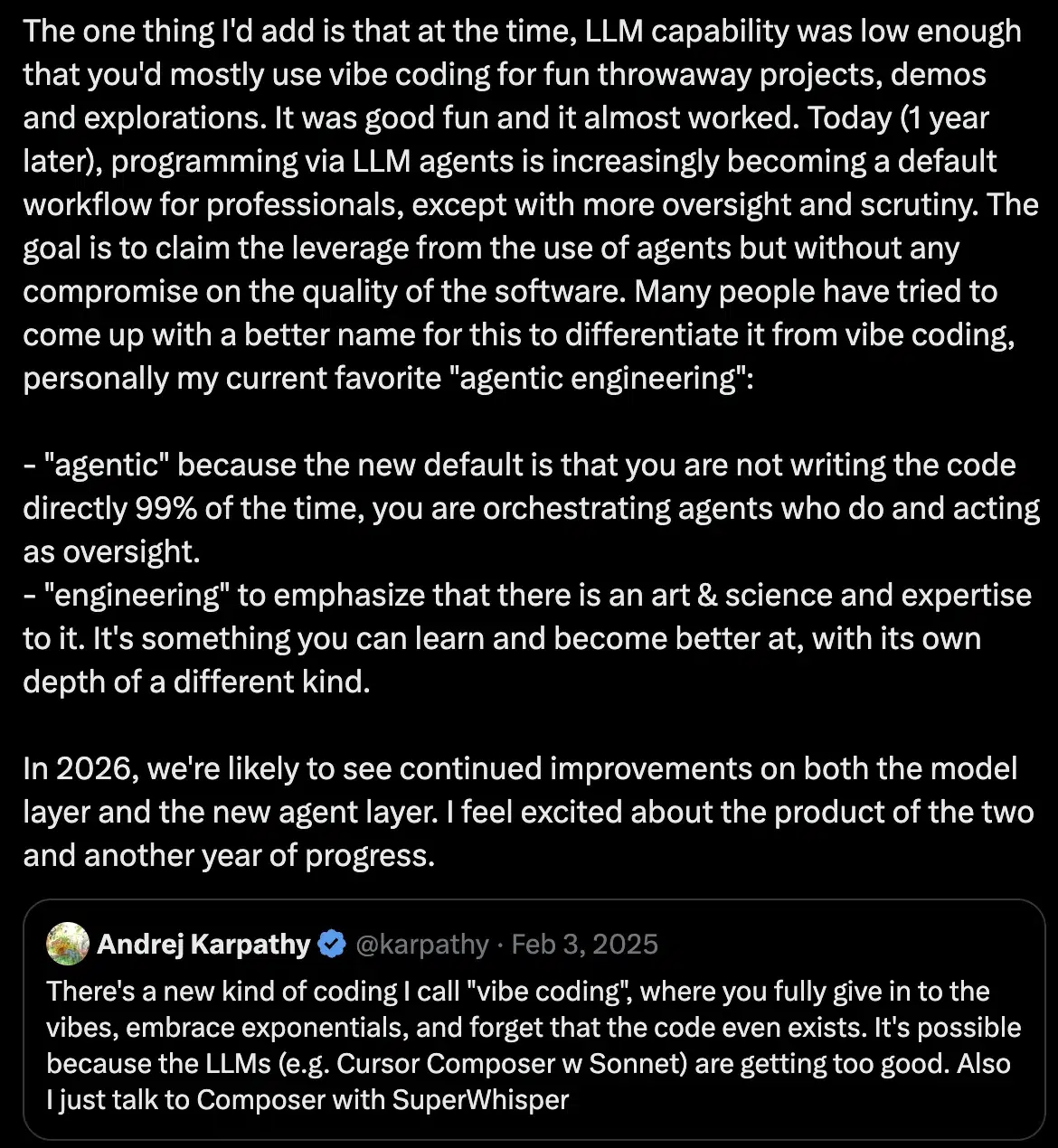

Then, almost exactly one year later, Karpathy posted again. He acknowledged what vibe coding had become and introduced the term he now preferred: agentic engineering.

In this piece, we’ll break down what vibe coding and agentic engineering actually mean, and unpack the difference between them.

For engineering and technology leaders, this shift is worth understanding as AI reshapes software development at a structural level.

What Is Vibe Coding?

Vibe coding is an approach to software development in which a developer describes what they want in natural language and lets an AI model generate the implementation, largely without reviewing or deeply understanding the code that comes back.

Karpathy coined the term in February 2025, and it stuck because it named something that was already happening across the developer community: a growing number of engineers and non-engineers alike were spending more time describing intent than writing code.

In a typical vibe coding workflow, a developer opens a tool like Cursor, Claude Code, or Replit, describes the feature or fix they need, accepts the generated output, runs it to see if it works, and pastes any errors back into the prompt for the model to resolve. The process repeats until something functional exists.
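As a rough illustration, the loop described above can be sketched in a few lines of Python. This is a minimal sketch, not the behavior of any particular tool: `vibe_loop`, `generate`, and `run` are hypothetical names, and the two stub functions stand in for a real model and a real runtime.

```python
from typing import Callable, Optional, Tuple


def vibe_loop(
    prompt: str,
    generate: Callable[[str], str],       # hypothetical: prompt -> code
    run: Callable[[str], Optional[str]],  # hypothetical: code -> error text, or None on success
    max_rounds: int = 5,
) -> Tuple[str, int]:
    """Prompt, accept, run; if it breaks, paste the error back in and retry."""
    code = generate(prompt)  # accepted as-is, nobody reads it
    for attempt in range(1, max_rounds + 1):
        error = run(code)
        if error is None:
            return code, attempt  # "it ran" is the only check applied
        # Feed the raw error back to the model instead of debugging by hand.
        code = generate(f"{prompt}\n\nThe last attempt failed with:\n{error}")
    raise RuntimeError(f"still failing after {max_rounds} attempts")


# Stubs standing in for a real model and runtime (illustration only).
def fake_generate(prompt: str) -> str:
    return "v2" if "failed with" in prompt else "v1"


def fake_run(code: str) -> Optional[str]:
    return "NameError: name 'todo' is not defined" if code == "v1" else None


result = vibe_loop("build a todo app", fake_generate, fake_run)
```

Notice what the loop never does: no step reads the code, and "success" means only that nothing crashed.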

Karpathy described accepting changes without reading diffs, working around bugs he could not fix, and building projects where the code grew beyond his usual comprehension. His point was not that this was best practice, but that it mostly worked for the kinds of things he was building at the time.

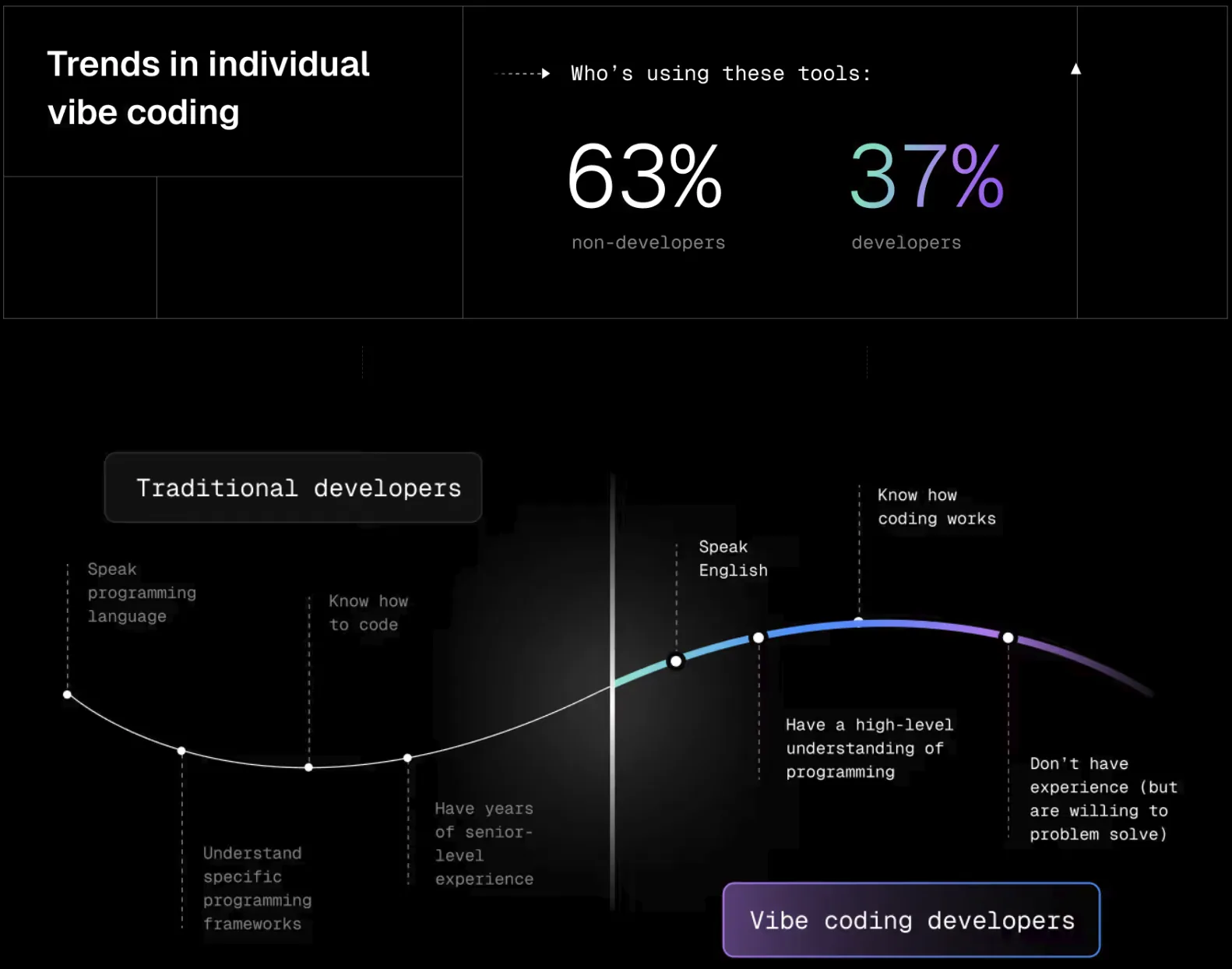

The community latched onto vibe coding for real reasons. It dramatically lowered the barrier to building software. By mid-2025, 63% of active vibe coders were non-developers. They were people who had ideas and finally had a practical path to executing them.

Source: Vercel

For prototypes, MVPs, and exploratory projects where speed matters most and production-grade quality is not yet the goal, the approach delivered genuine value.

Where vibe coding starts to break down is when the code it produces needs to be maintained, extended, audited, or relied upon by real users. Since no one reviewed it, no one owns it in a meaningful sense, and no one can fully explain what it does or why.

That gap, between generating code and engineering software, is exactly what the move to agentic engineering is meant to address.

What Is Agentic Engineering?

On February 4, 2026, almost exactly one year after his original tweet, Karpathy quote-posted it with a follow-up.

Source: X

In that follow-up, he acknowledged what vibe coding had turned into, and then reframed the conversation with a new term: Agentic Engineering.

“Agentic” because the developer is no longer writing code directly most of the time; they are orchestrating AI agents who do, and acting as the oversight layer on top of that.

“Engineering” because skill, expertise, and discipline are still central to the work. It is something you can learn, practice, and get better at.

That combination is what separates it from vibe coding, and the distinction matters more than it might initially seem.

In practice, agentic engineering starts before any prompt is written. The engineer writes a design document or specification, sometimes with AI assistance, defines the architecture, and breaks the work into well-scoped tasks.

The AI agent then handles implementation within those boundaries. Every diff gets reviewed with the same rigor you would apply to a teammate’s pull request. The engineer remains accountable for what gets built throughout the entire process, not just at the end.
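One way to picture that accountability is as a merge gate. The sketch below is an assumption about how a team might encode it, not a prescribed process: `ChangeRequest`, its fields, and `merge_blockers` are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class ChangeRequest:
    task_id: str                # hypothetical ID of a well-scoped task
    spec_ref: str               # hypothetical pointer to the design document
    tests_passed: bool          # result of the test run for this diff
    reviewed_by: Optional[str]  # the human who read the diff, or None


def merge_blockers(change: ChangeRequest) -> List[str]:
    """Return the reasons this agent-written change may not merge yet."""
    blockers = []
    if not change.spec_ref:
        blockers.append("no spec reference")
    if not change.tests_passed:
        blockers.append("tests failing or not run")
    if change.reviewed_by is None:
        blockers.append("diff not reviewed by a human")
    return blockers


ready = ChangeRequest("T-1", "docs/checkout-spec.md", True, "alice")
unreviewed = ChangeRequest("T-2", "docs/checkout-spec.md", True, None)
```

Here `merge_blockers(ready)` returns an empty list, while `merge_blockers(unreviewed)` names the missing human review. The agent can do all of the typing, but the change still cannot land without a spec, passing tests, and a named reviewer.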

As Addy Osmani, Director at Google Cloud AI, puts it:

“Vibe coding = YOLO (You Only Live Once). Agentic engineering = AI does the implementation, human owns the architecture, quality, and correctness.”

— Addy Osmani, Director at Google Cloud AI

That line captures the practical difference more cleanly than most formal definitions.

Source: Addy Osmani

Vibe Coding vs. Agentic Engineering: The Core Differences

With both terms defined, here are the key distinctions between them:

| Aspect | Vibe Coding | Agentic Engineering |
| --- | --- | --- |
| Starting point | A prompt describing what you want | A written spec or design document |
| Developer role | Prompt driver, accepts AI output | Architect and reviewer, directs agents |
| Code review | Minimal or none | Rigorous review of every change |
| Testing | Optional, often skipped | Required, tests run before merging |
| Accountability | Diffuse, code is not fully understood | Clear, engineer owns architecture and correctness |
| Auditability | Limited or none | Full trace of decisions and changes |
| Best suited for | Prototypes, MVPs, personal projects | Production systems, enterprise codebases |
| Risk profile | High for production use | Managed through oversight and process |

The table above captures the structural differences, but the more important distinction is cultural.

Vibe coding defers quality costs to the future. You get speed now and pay with technical debt, security vulnerabilities, and maintenance burden later. Agentic engineering pays those costs upfront through spec writing, structured review, and test coverage, which means the leverage it creates is sustainable rather than borrowed.

As one analysis put it, the legal reality is also shifting in 2026: financial losses caused by black-box algorithms are becoming a genuine liability, and agentic engineering provides the digital provenance to address that.

With agentic engineering, there is a clear record of which agent generated the code, which human reviewed it, and which specification it was built to satisfy. Vibe coding produces none of that, and as AI-generated code moves deeper into enterprise production environments, that gap is becoming a compliance issue rather than just a quality concern.
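To make the idea of a provenance record concrete, here is a minimal sketch of what one audit entry could contain. The structure is an assumption for illustration only: `ProvenanceRecord`, `record_change`, `to_log_line`, and the field names are hypothetical, and a real system would likely store more (timestamps, model versions, approvals).

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ProvenanceRecord:
    agent: str        # which agent generated the code
    reviewer: str     # which human reviewed it
    spec_ref: str     # which specification it was built to satisfy
    diff_sha256: str  # fingerprint tying the record to one exact diff


def record_change(diff: str, agent: str, reviewer: str, spec_ref: str) -> ProvenanceRecord:
    """Build an audit entry for one reviewed, agent-written change."""
    digest = hashlib.sha256(diff.encode("utf-8")).hexdigest()
    return ProvenanceRecord(agent, reviewer, spec_ref, digest)


def to_log_line(record: ProvenanceRecord) -> str:
    """Serialize the entry as one JSON line for an append-only audit log."""
    return json.dumps(asdict(record), sort_keys=True)


entry = record_change(
    diff="+ def total(cart): return sum(i.price for i in cart)",
    agent="coding-agent-1",          # hypothetical agent name
    reviewer="alice",                # hypothetical reviewer
    spec_ref="docs/checkout-spec.md",  # hypothetical spec path
)
line = to_log_line(entry)
```

Hashing the diff means the record points at one exact change, so a later edit to the code cannot silently inherit an earlier review.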

What the Data Actually Shows

The appeal of vibe coding was real, and the early numbers reflected that.

A quarter of YC’s Winter 2025 batch had codebases that were 95% AI-generated, and teams were shipping prototypes faster than they ever had. The productivity narrative was compelling.

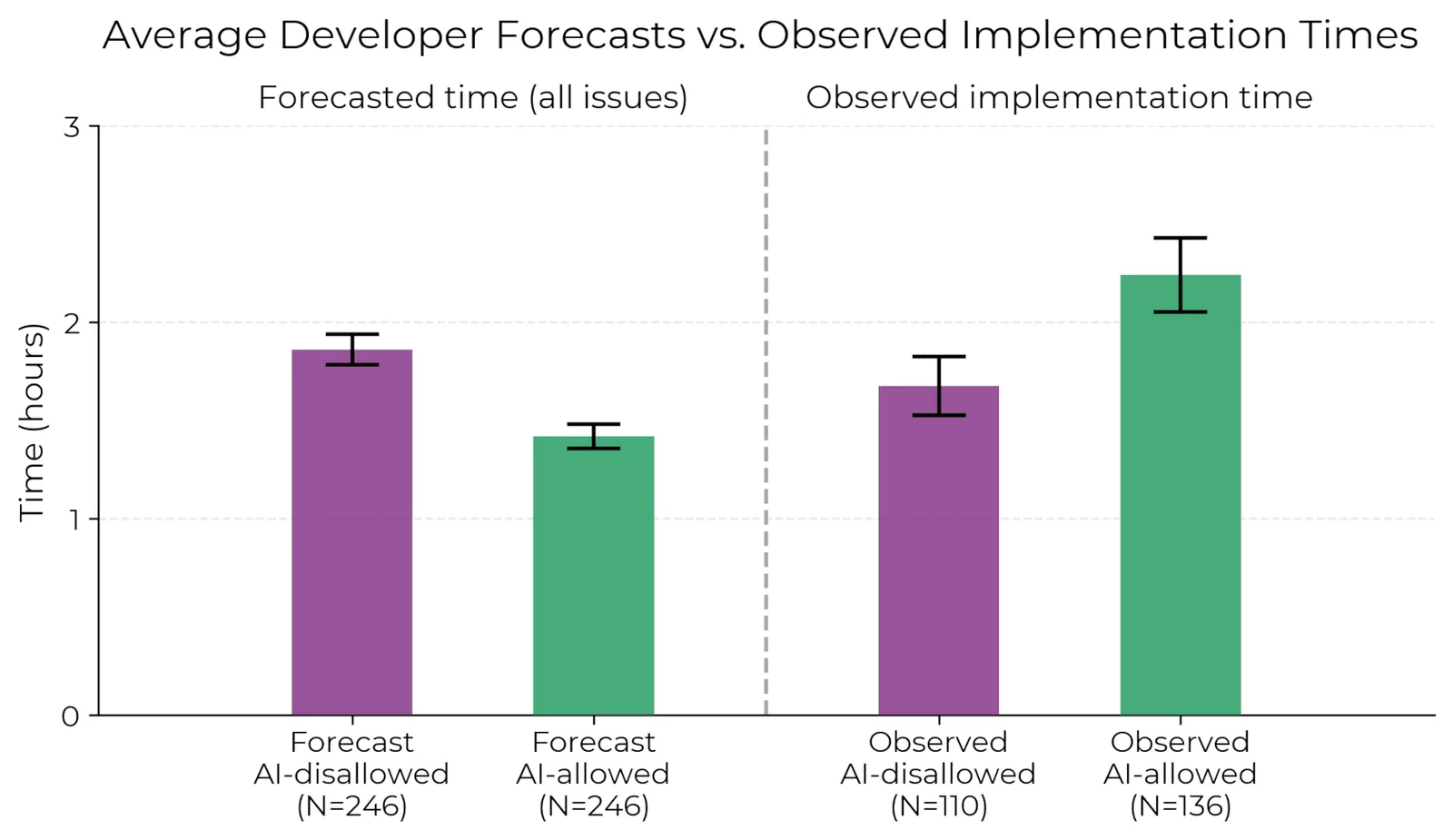

The research that followed told a more complicated story.

A Veracode study in 2025 analyzed over 100 large language models across 80 coding tasks and found that 45% of AI-generated code introduced security vulnerabilities, many of them critical flaws including those on the OWASP Top 10.

The quality of generated code had improved significantly over the years, but the security of that code had shown essentially no improvement alongside it.

A randomized controlled trial by METR found that experienced open-source developers were actually 19% slower when using AI coding tools, despite predicting beforehand that they would be 24% faster and still believing afterward that they had been faster. The subjective feeling of velocity was masking a measurable slowdown.

Source: METR
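To put the METR percentages in concrete terms, here is a small worked example. It assumes both figures describe changes in task completion time, and it uses a hypothetical 60-minute task; that baseline is illustrative and does not come from the study.

```python
def completion_minutes(baseline: float, change: float) -> float:
    """Scale a baseline duration by a fractional change in completion time."""
    return baseline * (1 + change)


BASELINE = 60.0  # hypothetical task length in minutes (not from the study)

predicted = completion_minutes(BASELINE, -0.24)  # developers expected 24% less time
actual = completion_minutes(BASELINE, +0.19)     # measured: 19% more time
gap = actual - predicted                         # distance between belief and result

print(f"predicted {predicted:.1f} min, actual {actual:.1f} min, gap {gap:.1f} min")
```

Under those assumptions, the gap between expectation and measurement is larger than either percentage suggests on its own, which is why perceived speed is a poor proxy for delivered speed.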

By September 2025, the developer community had a name for what was happening: the vibe coding hangover. Senior engineers were describing AI-generated codebases as a kind of development hell, technically functional but barely comprehensible, loosely structured, resistant to modification, and prone to cascading failures when anyone tried to extend them.

The emerging concept that captures the longer-term cost is cognitive debt, the accumulated burden of poorly managed AI interactions, context loss, and unreliable agent behavior in production systems.

Where technical debt describes code that needs to be rewritten, cognitive debt describes systems that nobody fully understands, where changes are made cautiously and incidents take longer to diagnose because the foundational comprehension simply is not there.

Organizations that moved fast with vibe coding are now dealing with this in their incident rates, support queues, and the time their most experienced engineers spend deciphering code nobody owns.

What This Means for Your Engineering Team

The vibe coding versus agentic engineering distinction has direct implications for how technical leaders think about team structure, hiring, and capability development, not just which tools to approve.

For senior engineers, data architects, ML practitioners, and technical directors with strong foundational knowledge, agentic engineering is a genuine force multiplier.

The judgment built over years of designing systems, recognizing failure patterns early, and understanding what good architecture looks like gets amplified when that experience is directed toward orchestrating AI agents rather than writing boilerplate. The work shifts toward higher-leverage decisions, which is where that expertise was always most valuable.

For developers earlier in their careers, the picture is more nuanced. Using AI-generated code without deeply comprehending its functionality is a real risk, not just to the product, but to the developer’s own growth.

Vibe coding in the hands of someone still building their engineering foundations can produce functional output without developing the understanding needed to maintain, debug, or reason about that output under pressure.

Agentic engineering, practiced with genuine rigor, can still be a strong learning environment for junior engineers, but only if the review culture and mentorship exist to make the process meaningful rather than just another way to ship code faster.

The team-level implication is that the shift toward agentic engineering changes what senior engineering judgment looks like day to day. The value is in writing good specs, making sound architectural decisions, and reviewing AI output with real depth, not in raw lines of code produced.

That changes hiring profiles, onboarding expectations, and how you measure engineering effectiveness. Teams that have not yet thought through those changes are likely running agentic workflows on top of vibe coding culture, which is where a lot of the cognitive debt originates.

How GAP Helps Engineering Teams Make the Transition

Understanding the difference between vibe coding and agentic engineering is the starting point, not the finish line.

Stack Overflow’s 2025 Developer Survey found that 84% of respondents use or intend to use AI-assisted programming.

Source: Stack Overflow

This means that the question for most technical leaders is not whether AI is in their development workflow, but whether the practices around it reflect genuine engineering discipline and whether the team has the capacity to sustain that discipline at scale.

Most internal teams cannot build and maintain that foundation alone while also shipping products. The testing pipelines, agent configuration standards, governance frameworks, and review culture that make agentic engineering work in practice require sustained depth that takes time and expertise to develop. That is where GAP comes in.

GAP’s AI Acceleration Workshops are designed for engineering organizations at every stage of AI maturity, from teams still figuring out where AI fits in their workflows to those looking to scale existing pilots into production-grade systems.

We assess where the genuine capability gaps are, help organizations define governance policies that function under real conditions rather than just on paper, and build the technical foundation that makes agentic workflows sustainable at scale.

For teams that need validation before committing to a full implementation, Validate:AI offers a structured, evidence-based path from proof of concept to production.

Rather than asking teams to make large upfront investments in unproven approaches, it breaks AI implementation into manageable stages, each designed to answer specific questions and build on the results of the last.

GAP’s team of AI engineering specialists identifies high-impact use cases tied directly to business goals, pilots solutions under controlled conditions, and helps organizations build the organizational readiness to support what they are building technically.

Across both offerings, GAP brings specialists in data science, data engineering, MLOps, and AI architecture, along with a nearshore delivery model that provides the sustained engineering capacity most internal teams cannot staff alone.

If your team is working through this transition and wants a direct conversation about where to start, talk to a GAP expert.