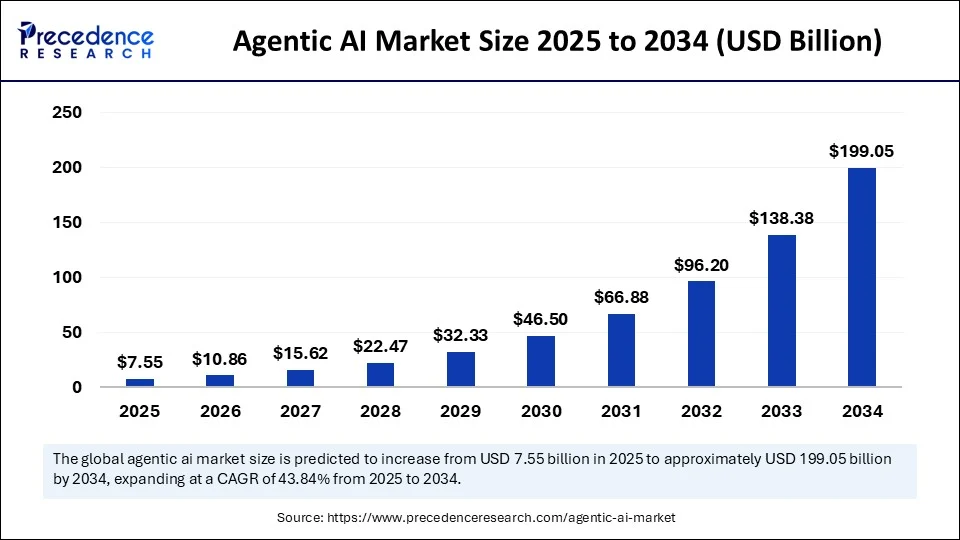

On paper, agentic AI looks like a runaway success. 88% of executives are increasing their AI budgets in 2026 specifically to invest in agentic AI. The market is exploding from $7.55 billion in 2025 to a projected $199 billion by 2034.

Source: Precedence Research

Companies everywhere are racing to deploy autonomous agents that can handle customer support, process invoices, route leads, and manage workflows without human intervention.

Yet Gartner predicts over 40% of these agentic AI projects will be canceled by the end of 2027. The reason isn’t the technology. It’s the foundation, or rather the lack of one.

Companies are building autonomous systems on top of disconnected data, undefined processes, and workflows that were never designed for AI. When you try to automate something that doesn’t work well manually, you just get faster failure.

So before you spin up another proof of concept or commit to a six-figure implementation, ask yourself: Is your workflow actually ready for an AI agent?

Why AI Agent Projects Are Failing

The failure pattern is consistent across industries, with the same sequence playing out again and again.

Consider a company that identifies a high-value use case, say customer support ticket triage. It pilots an agent, the agent performs well in testing, and then it goes to production. Within weeks, the agent starts routing tickets incorrectly, escalating cases that should have been resolved, or missing urgent requests entirely.

It’s tempting to blame the AI, but the issue usually isn’t the model itself. The problem comes from the underlying workflow, which often depends on tribal knowledge, inconsistent data entry, or manual workarounds that the agent cannot see.

Here’s what happens when foundations aren’t in place:

- Agents underperform because they lack context. They can’t access the systems they need, or the data they pull is incomplete, outdated, or contradictory. A sales agent recommending outreach to a contact who changed roles six months ago loses credibility fast.

- Projects stall in proof-of-concept purgatory. Only 48% of AI projects make it into production, and it takes an average of 8 months to go from prototype to deployment. That’s not because the technology is immature, but because the workflow isn’t ready to be automated.

- ROI never materializes. 42% of AI initiatives failed in 2025 with no measurable return. Most of these failures trace back to unclear business value or workflows that weren’t properly assessed before automation began.

The pattern is clear. Operational readiness determines whether an AI agent succeeds or becomes another canceled project.

How to Tell If Your Workflow Is Agent-Ready

Not every workflow should be automated, and not every automation needs to be agentic.

The best candidates for AI agents share specific characteristics that make them reliable, measurable, and valuable to automate.

High-volume, repetitive tasks

If your team processes hundreds or thousands of similar requests every week, such as support tickets, expense approvals, form submissions, or data entry, you’ve got a strong candidate. Agents excel at handling volume without fatigue, error accumulation, or quality degradation.

Clear decision logic

Can you write down the rules? If a request comes in, what determines the next step? Agents work best when the workflow has defined criteria, consistent routing logic, and predictable outcomes. If your process depends heavily on “you just know when you see it,” you’re not ready yet.
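One way to test whether the rules can be written down is to try expressing them as data. The sketch below is a minimal illustration for ticket routing; the `Ticket` fields, the queue names, and the rules themselves are hypothetical and stand in for whatever your own process defines.

```python
from dataclasses import dataclass


@dataclass
class Ticket:
    category: str
    priority: str
    customer_tier: str


# Hypothetical routing rules, checked in order; the first match wins.
RULES = [
    (lambda t: t.priority == "urgent", "on_call_queue"),
    (lambda t: t.category == "billing", "finance_queue"),
    (lambda t: t.customer_tier == "enterprise", "account_manager_queue"),
]


def route(ticket: Ticket) -> str:
    """Return the queue for a ticket, or hand it to a person if no rule applies."""
    for condition, queue in RULES:
        if condition(ticket):
            return queue
    # No documented rule matched: send to a human rather than guess.
    return "human_review"


print(route(Ticket(category="billing", priority="normal", customer_tier="free")))
```

If a large share of real tickets falls through to `human_review`, that is a sign the decision logic still lives in people’s heads rather than in documented criteria.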

Structured or semi-structured data

Agents need data they can parse reliably. Forms with consistent fields, tickets with standardized categories, transactions with clear metadata all work. Handwritten notes, unstructured emails with variable formats, or conversations requiring deep interpretation are harder.

Recoverable outcomes

Start with workflows where errors are visible and fixable. An agent that miscategorizes a support ticket? Your team can catch and correct it. An agent that incorrectly approves a contract introduces financial and legal risk. Build confidence with lower-risk use cases before escalating to mission-critical decisions.

Measurable results

What does success look like? Time saved? Cost reduction? Faster resolution? Higher accuracy? If you can’t define the metric, you can’t prove ROI, and without ROI, the project gets canceled when budgets tighten.

Identifying the Right Use Cases

Good use cases for agentic workflows have a few things in common. They’re annoying to humans, easy to measure, and clearly beneficial when automated.

Examples of good use cases include:

- Tagging and categorizing knowledge base articles

- Form data entry from PDFs or scanned documents into structured systems

- User access provisioning and deprovisioning based on role or status changes

- Expense report approvals following documented policy rules

- Invoice processing and matching against purchase orders

Examples of bad use cases include:

- Any workflow with unclear success criteria or undefined processes

- Strategic planning, contract negotiation, or high-stakes decision-making

- Processes that change frequently without documentation

- Workflows that rely on extensive human judgment or contextual nuance

When evaluating potential use cases, prioritize based on four factors:

| Factor | Key Questions |
| --- | --- |
| Business impact | How much time or cost would automation save? Does it unlock revenue or improve customer experience? |
| Technical readiness | Is the data accessible, clean, and structured? Can we integrate the systems the agent needs? |
| User adoption | Will the team actually use it, or will they work around it? Do they trust the output enough to act on it? |
| Risk level | What happens if the agent gets it wrong? Can we catch and fix errors before they cause damage? |

If a use case scores high on business impact and technical readiness but low on risk, start there. Build momentum, prove ROI, and earn the credibility to tackle harder problems.
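The four factors can be turned into a rough ranking. The following is a minimal sketch, assuming each factor is scored from 1 to 5 and weighted equally; the scale, the equal weighting, and the example scores are all illustrative assumptions, not measured data.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    business_impact: int      # 1 (low) to 5 (high)
    technical_readiness: int  # 1 (low) to 5 (high)
    user_adoption: int        # 1 (low) to 5 (high)
    risk_level: int           # 1 (low risk) to 5 (high risk)


def priority_score(uc: UseCase) -> int:
    """Sum the factors, inverting risk so that lower risk scores higher."""
    return (
        uc.business_impact
        + uc.technical_readiness
        + uc.user_adoption
        + (6 - uc.risk_level)
    )


# Made-up scores for illustration only.
candidates = [
    UseCase("Invoice matching", 4, 4, 4, 2),
    UseCase("Contract negotiation", 5, 2, 2, 5),
    UseCase("KB article tagging", 3, 5, 4, 1),
]

ranked = sorted(candidates, key=priority_score, reverse=True)
for uc in ranked:
    print(f"{uc.name}: {priority_score(uc)}")
```

With these made-up scores, KB article tagging ranks first (17), ahead of invoice matching (16) and contract negotiation (10). The point is not the exact numbers but that the comparison becomes explicit and open to challenge.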

What Changes When a Workflow Becomes Agentic

Traditional automation follows scripts: if this happens, do that. Robotic process automation (RPA) tools excel at this kind of work, such as logging into systems, copying data, and clicking buttons in sequence. But they break when anything changes.

Agentic workflows are different. Agents don’t just execute steps; they perceive, reason, and adapt. They can handle variability, make decisions based on context, and recover when something unexpected happens.

This shift changes how you think about automation. Instead of scripting every possible scenario, you define the objective and let the agent figure out how to achieve it. Instead of hardcoding decision trees, you provide context and let the agent reason through the options.

But this flexibility comes with a tradeoff: agents are non-deterministic. The same input might produce slightly different outputs depending on how the model interprets context.

For workflows where consistency is critical, such as financial reconciliation, regulatory compliance, and legal approvals, you need guardrails, validation layers, and human oversight.
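A validation layer can be as simple as a deterministic check that sits between the agent’s proposed action and its execution. The sketch below assumes the agent returns an action, an amount, and a confidence score; the `AgentDecision` shape, the allowed actions, and both thresholds are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass

ALLOWED_ACTIONS = {"approve", "reject", "escalate"}
MAX_AUTO_APPROVE_AMOUNT = 500.00  # assumed policy limit
MIN_CONFIDENCE = 0.90             # assumed confidence threshold


@dataclass
class AgentDecision:
    action: str
    amount: float
    confidence: float


def apply_guardrails(decision: AgentDecision) -> str:
    """Return the action to execute, or 'human_review' if any check fails."""
    # Reject anything outside the set of actions the workflow permits.
    if decision.action not in ALLOWED_ACTIONS:
        return "human_review"
    # Low-confidence decisions go to a person.
    if decision.confidence < MIN_CONFIDENCE:
        return "human_review"
    # Large approvals always need human sign-off, however confident the agent is.
    if decision.action == "approve" and decision.amount > MAX_AUTO_APPROVE_AMOUNT:
        return "human_review"
    return decision.action


print(apply_guardrails(AgentDecision("approve", 120.00, 0.97)))
print(apply_guardrails(AgentDecision("approve", 5000.00, 0.99)))
```

Because the checks are plain code, they behave the same way every time, which offsets the non-determinism of the model for the cases that matter most.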

Fixing the Data Challenge

Most companies don’t have one clean, centralized database.

Instead, data is spread across a patchwork of systems, with CRM records in Salesforce, financial data in NetSuite, support tickets in Zendesk, customer interactions in Intercom, legacy information in SQL Server, and product data sitting in spreadsheets.

For humans, this is manageable. We context-switch between systems, mentally reconcile differences, and fill in gaps with institutional knowledge. But for AI agents, this is a nightmare.

Agents need a virtualized, unified view of your data. They can’t log into five different systems, correlate records manually, and make judgment calls about which version of the truth is correct.

They need data that’s:

- Federated: Connected across systems without requiring manual consolidation. Agents should be able to query customer data, pull transaction history, check inventory levels, and access support tickets from a single interface.

- Normalized: Consistent in format, structure, and meaning. If one system stores dates as MM/DD/YYYY and another uses YYYY-MM-DD, the agent needs a layer that standardizes the format.

- Integrated: Updated in real time or near-real time. Agents making decisions on stale data produce bad outcomes. If a customer canceled their subscription this morning, your agent shouldn’t be sending renewal offers this afternoon.
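Two of the requirements above, normalization and freshness, can be sketched in a few lines. The example assumes the two date formats mentioned in the list and a 15-minute freshness window; the function names and the window are hypothetical, and a real normalization layer would cover far more than dates.

```python
from datetime import datetime, timedelta, timezone

# Assumed source formats: one system emits MM/DD/YYYY, another YYYY-MM-DD.
KNOWN_FORMATS = ("%m/%d/%Y", "%Y-%m-%d")


def normalize_date(raw: str) -> str:
    """Convert a date string in any known format to ISO 8601 (YYYY-MM-DD)."""
    for fmt in KNOWN_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    # Fail loudly instead of letting an unparsed value reach the agent.
    raise ValueError(f"Unrecognized date format: {raw!r}")


def is_stale(
    last_synced: datetime,
    now: datetime,
    max_age: timedelta = timedelta(minutes=15),  # assumed freshness window
) -> bool:
    """Return True if the record is older than the allowed freshness window."""
    return now - last_synced > max_age


print(normalize_date("03/07/2025"))
print(normalize_date("2025-03-07"))

now = datetime(2025, 3, 7, 12, 0, tzinfo=timezone.utc)
print(is_stale(datetime(2025, 3, 7, 9, 0, tzinfo=timezone.utc), now))
```

An agent that checks `is_stale` before acting can refresh the record or defer to a person instead of sending a renewal offer to a customer who canceled that morning.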

This is where most AI projects stall. That is no surprise: Gartner reports that 63% of organizations either don’t have or are unsure whether they have the right data management practices for AI, and 60% of AI projects lacking proper data foundations are expected to be abandoned by 2026.

Building this foundation isn’t optional. It’s mandatory prerequisite work, and it’s not glamorous. No one gets excited about data normalization, schema mapping, or API integration.

But without it, your agents will hallucinate, recommend incorrect actions, and erode trust faster than you can rebuild it.

How GAP Helps You Build the Foundation

At GAP, we’ve spent over 30 years helping companies modernize legacy systems and build the infrastructure that AI needs to succeed.

Our AI Acceleration Workshops are structured around three stages: Establish, Identify, and Enable, helping teams align on goals, evaluate feasibility, and move toward implementation with clarity.

We also offer Validate:AI proof-of-concept programs, which test whether your workflows, data, and systems can actually support agentic AI. This isn’t a vendor pitch where we assume the answer is yes. We assess honestly, identify gaps, and help you fix them before you waste money on production deployments that won’t work.

Behind this work are GAP’s data engineering teams who specialize in building the pipelines, federation layers, and integration architecture that agents need to operate reliably.

We work in LatAm time zones, deliver with our 2NABOX model (two leaders—one US-based, one LatAm-based—fully accountable for outcomes), and bring significant cost savings compared to US-based hiring.

Most importantly, we’ve seen what works and what fails. We know the difference between hype-driven experimentation and disciplined implementation.

If you’re serious about agentic workflows, we’ll help you assess readiness, build the foundation, and deploy systems that actually work.

Get started with a free AI readiness calculator and find out whether your workflows are ready for agents, or what you need to fix first.